NGFW Clusters

NGFW clustering concepts for PA-7500 Series firewalls.

| Where Can I Use This? | What Do I Need? |

|---|---|

|

One of the following:

|

Learn about NGFW clustering before creating a cluster:

- Benefits and Structure of an NGFW Cluster

- Inter-Firewall Links (IFLs)

- MACsec (PAN-OS 11.1.5 and later releases)

- MC-LAGs, Orphan Ports, and Orphan LAGs

- Node States Determine the Cluster State

- System Faults

- Role of the Leader Node

- Multiple Virtual Systems in an NGFW Cluster (PAN-OS 11.1.7 and later releases)

- Layer 7 Support and Graceful Failover

Benefits and Structure of an NGFW Cluster

Before describing an NGFW cluster, let's review the two legacy HA modes:

- Active/passive (A/P): One firewall actively manages traffic while the other is synchronized to the first firewall (in configuration and states) and is ready to transition to the active state if a failure occurs.

- Active/active (A/A): Both firewalls in the pair are active, process traffic, and work synchronously to handle session setup and session ownership. Both firewalls individually maintain session tables and synchronize to each other. The two firewalls support two separate routing domains.

For the PA-7500 Series firewalls in an NGFW cluster, the control planes (management planes) of the two firewalls are in active/passive mode where the passive firewall is fully synchronized to the active firewall in configuration and states. Additionally, each PA-7500 Series firewall has a maximum of seven data processing cards (DPC); each DPC has six data planes. The data planes of the two firewalls are in active/active mode where the firewall with the active management plane incorporates its own interfaces with all of the interfaces of the passive chassis. All of the interfaces of both chassis are representable and controllable on a single, centralized chassis, which is the leader or leader node. One node is elected the leader; the other firewall is a nonleader node. In an NGFW cluster, the firewalls are logically merged into one logical firewall from the control plane and management perspective. They have a single routing domain. The NGFW cluster replaces legacy HA pairs and legacy HA clustering, which are not available on PA-7500 Series firewalls (whether in an NGFW cluster or not).

Use Panorama to configure the PA-7500 Series firewalls for an NGFW cluster. The first node you assign to the cluster automatically becomes Node 1. After you assign a node to a cluster, you can't configure the node locally, but must use Panorama. Place all PA-7500 Series firewalls in a cluster in the same template stack.

NGFW cluster nodes must have the Advanced Routing Engine enabled; they don't support the legacy routing engine.

Unlike interfaces outside of an NGFW cluster, interfaces on firewalls in a cluster include the Node ID at the beginning of the interface name. For example, node1:ethernet2/1 or node2:ethernet1/19.
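
The naming convention above can be illustrated with a small parser. This is only a sketch: the regular expression is inferred from the two example names in the text, and the `ClusterInterface` type and `parse_cluster_interface` function are hypothetical helpers, not part of PAN-OS or Panorama.

```python
import re
from typing import NamedTuple


class ClusterInterface(NamedTuple):
    """Parts of a cluster interface name (hypothetical helper type)."""
    node_id: int
    slot: int
    port: int


# Assumed pattern, inferred only from the examples "node1:ethernet2/1"
# and "node2:ethernet1/19"; other interface types are not covered.
_CLUSTER_IF = re.compile(r"^node(\d+):ethernet(\d+)/(\d+)$")


def parse_cluster_interface(name: str) -> ClusterInterface:
    """Split a name such as 'node1:ethernet2/1' into node ID, slot, and port."""
    match = _CLUSTER_IF.match(name)
    if match is None:
        raise ValueError(f"not a cluster interface name: {name!r}")
    node_id, slot, port = (int(group) for group in match.groups())
    return ClusterInterface(node_id, slot, port)


print(parse_cluster_interface("node2:ethernet1/19"))
```

A name without the node prefix, such as `ethernet1/1`, is rejected by this sketch because it would belong to a firewall outside a cluster.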

NGFW clustering is independent of legacy HA pairs and legacy HA clusters. NGFW clustering is the only HA or clustering solution available on PA-7500 Series firewalls. However, the firewalls in the NGFW cluster use the Group ID that the legacy HA uses. The Group ID helps differentiate MAC addresses when two HA pairs (or an HA pair and an NGFW cluster) in the same Layer 2 network share MAC addresses. The Group ID is in the same location within the virtual MAC address for an NGFW firewall node as it is for a legacy HA firewall node.

Inter-Firewall Links (IFLs)

PA-7500 Series firewalls in an NGFW cluster have a chassis interconnection through their HSCI interfaces, which function at Layer 2. The firewalls are back-to-back; HSCI-A ports on each firewall connect to each other, and HSCI-B ports on each firewall connect to each other. (The firewalls are directly connected to each other; there can't be a switch or other device between them.) The connections between the HSCI interfaces are high-bandwidth, inter-firewall links (IFLs) that handle asymmetric traffic, along with cluster synchronization at a control and dataplane level. The two IFLs function in active/backup mode. HSCI-A is the default active link; the active and backup roles are not configurable. If HSCI-A goes down, HSCI-B becomes the active link.
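
The active/backup behavior of the two IFLs can be modeled in a few lines. This is a minimal sketch, not firewall code: the `active_ifl` function is hypothetical, and it assumes that HSCI-A carries traffic whenever it is up. The text does not say whether HSCI-A reclaims the active role after it recovers, so treat that part as an assumption.

```python
from typing import Optional


def active_ifl(hsci_a_up: bool, hsci_b_up: bool) -> Optional[str]:
    """Return the IFL that carries traffic, or None if both links are down.

    HSCI-A is the default active link and HSCI-B is the backup.
    Assumption: HSCI-A is preferred whenever it is up.
    """
    if hsci_a_up:
        return "HSCI-A"
    if hsci_b_up:
        return "HSCI-B"
    return None


# HSCI-A goes down, so the backup link takes over.
print(active_ifl(hsci_a_up=False, hsci_b_up=True))
```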

MACsec

The IFL connection uses the HSCI ports on the NGFW exclusively. You can configure HSCI-A and HSCI-B for redundancy using an active/backup model.

Beginning with PAN-OS 11.1.5, NGFW clustering allows you to configure Media Access Control Security (MACsec) on the HSCI interface. MACsec is an IEEE standard (802.1AE) that operates at Layer 2. MACsec provides data confidentiality and integrity between endpoints by adding a security tag and an integrity check value to each Ethernet frame. It provides confidentiality through encryption of the data so that only endpoints with the correct encryption key can access the data. MACsec provides integrity through a cryptographic mechanism, ensuring that no one has tampered with data in transit. MACsec also provides authentication by ensuring that only known endpoints can communicate on the Ethernet segment.

You can configure MACsec for the HSCI-A and HSCI-B ports (the active and backup ports) to protect the Layer 2 connections between the cluster peers. MACsec is disabled by default; you must configure it to enable it. (After you enable MACsec, you can disable it by removing the configuration items.) MACsec runs over each HSCI port. Because the firewalls are connected back-to-back, MACsec can establish a tunnel between the two firewalls. (The firewalls are directly connected to each other; there can't be a switch or other device between them.) MACsec has an associated pre-shared key (PSK) that must match on the two HSCI-A ports, and a PSK that must match on the two HSCI-B ports. Each session confirms that the PSKs match.

On the Communications tab, configuring MACsec involves a field and two profiles for each node: Key Server Priority (a ranged integer), Crypto Profile (a drop-down for profiles), and Pre-Shared Key Profile (a drop-down for profiles).
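
The PSK matching rule can be expressed as a simple pre-deployment check. The sketch below is illustrative only: it assumes you have some way to compare the PSK configured on each port of each node (represented here as plain dictionaries), and the `psk_mismatches` function is hypothetical rather than a PAN-OS or Panorama API.

```python
import hmac
from typing import Dict, List

HSCI_PORTS = ("HSCI-A", "HSCI-B")


def psk_mismatches(node1: Dict[str, str], node2: Dict[str, str]) -> List[str]:
    """Return the HSCI ports whose PSK differs between the two nodes.

    Each argument maps a port name to the PSK configured for it
    (a hypothetical representation). The PSK on HSCI-A must match the
    peer's HSCI-A PSK, and likewise for HSCI-B.
    """
    mismatched = []
    for port in HSCI_PORTS:
        # compare_digest avoids leaking where two secrets differ via timing.
        if not hmac.compare_digest(node1[port].encode(), node2[port].encode()):
            mismatched.append(port)
    return mismatched


node1 = {"HSCI-A": "key-for-a", "HSCI-B": "key-for-b"}
node2 = {"HSCI-A": "key-for-a", "HSCI-B": "different-key"}
print(psk_mismatches(node1, node2))
```

The two ports are checked independently because HSCI-A and HSCI-B each have their own PSK.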

MC-LAGs, Orphan Ports, and Orphan LAGs

The NGFW cluster supports an MC-LAG, which is a type of LAG. Recall that a LAG (also known as an aggregated Ethernet (AE) link or aggregate interface group) is a group of links that appear as one link to provide link redundancy. Links in a LAG that connect to endpoints on multiple chassis are configured as part of an MC-LAG. The multiple chassis appear to be a single firewall, providing node redundancy for Layer 3 and virtual wire interfaces only. (Layer 2 does not support MC-LAGs.)

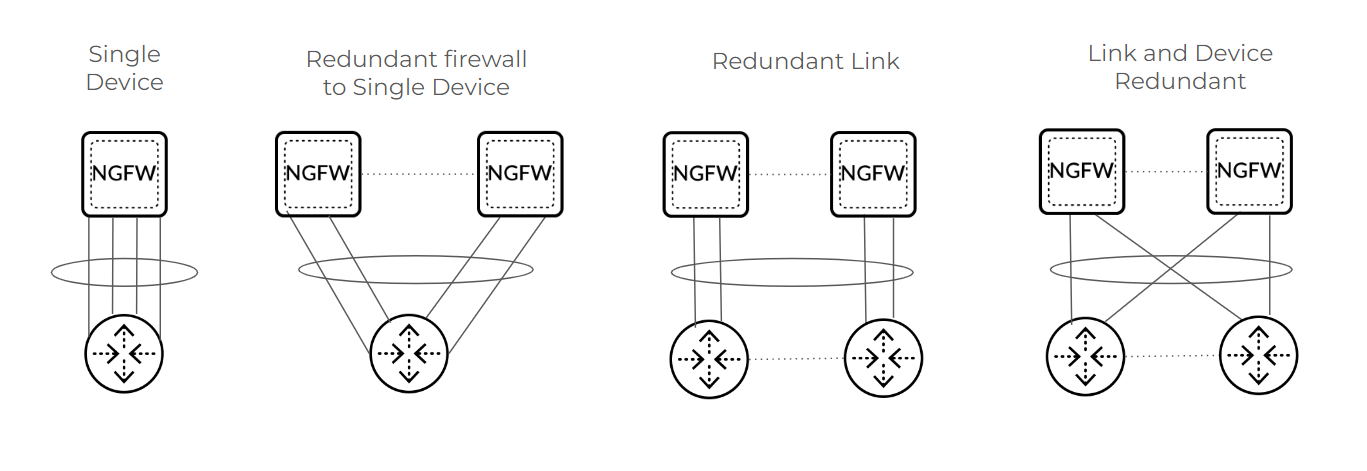

An MC-LAG is an AE interface group that has members spread across both firewalls (also referred to as nodes or chassis). The illustration represents different MC-LAG scenarios. MC-LAGs are controlled by a single control plane and seen as a single system with a backup management plane. MC-LAG supports redundancy in the event of link failure, card failure, or chassis failure. Each MC-LAG supports a maximum of eight members. A pair of firewalls in an NGFW cluster supports a maximum of 64 AE interface groups; the AE interface groups support Layer 3 within the cluster. (You can have an AE interface group using Layer 2 on a single device, but not an AE interface group using Layer 2 on an MC-LAG in the cluster.)

Even a single device scenario can use an MC-LAG, which protects the firewall against failure, but does not protect against failure of the connected third-party device.

An orphan port is a single Layer 2, Layer 3, or virtual wire link used for connection from one node. Instead of using a floating IP address, an orphan port has its own IP address. The first packet of a session determines the dataplane that owns that session flow. Return data take the reverse path over the same hops back to the source.

An orphan LAG is an AE interface group that has all members originating or terminating on a single firewall (similar to a standalone AE interface group). The single device in the graphic illustrates an example of an orphan LAG. Firewalls supported orphan ports and orphan LAGs prior to the introduction of NGFW clustering.

Aggregate Ethernet local bias is a behavior where traffic forwarding prefers a local member over remote ports. Because an MC-LAG has members on both nodes, local bias enforces traffic egress from the local node, instead of forwarding traffic over an HSCI link to the remote node. (The typical behavior of a LAG is to hash traffic and forward it to any member.)
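
The difference between an MC-LAG and an orphan LAG, and the effect of local bias, can be sketched as follows. This is a conceptual model under stated assumptions: members are represented as hypothetical `(node ID, interface name)` tuples, and the fallback to remote members when no local member exists is an assumption drawn from the word "prefers", not a documented rule.

```python
from typing import List, Tuple

Member = Tuple[int, str]  # (node ID, interface name); hypothetical representation
MAX_MC_LAG_MEMBERS = 8    # from the text: an MC-LAG supports at most eight members


def classify_lag(members: List[Member]) -> str:
    """Classify an AE interface group by where its members live."""
    if not members:
        raise ValueError("an AE interface group needs at least one member")
    nodes = {node_id for node_id, _ in members}
    if len(nodes) == 1:
        return "orphan LAG"  # all members on a single firewall
    if len(members) > MAX_MC_LAG_MEMBERS:
        raise ValueError("an MC-LAG supports a maximum of eight members")
    return "MC-LAG"          # members spread across both nodes


def egress_candidates(members: List[Member], local_node: int) -> List[Member]:
    """Apply local bias: prefer members on the node that holds the traffic.

    Assumption: if the local node has no member, any member may be used,
    which means crossing the HSCI link.
    """
    local = [member for member in members if member[0] == local_node]
    return local if local else list(members)


mc_lag = [(1, "node1:ethernet1/1"), (2, "node2:ethernet1/1")]
print(classify_lag(mc_lag), egress_candidates(mc_lag, local_node=2))
```

Without local bias, a hash over all members could pick the remote member and send the traffic across the HSCI link.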

Node States Determine the Cluster State

The combined node states of the nodes in an NGFW cluster determine the cluster state. First, let's consider the state of a cluster node, which can be one of these states:

- UNKNOWN—Clustering isn’t enabled. The node remains in this state until a cluster configuration push from Panorama or a commit enables clustering.

- INIT—Node transitions from UNKNOWN to INIT state after clustering is enabled. Node remains in INIT state until cluster initialization of node is complete. The node transitions to ONLINE state if INIT criteria are met. If INIT criteria fail to be met, the node transitions to ONLINE state after a timeout.

- ONLINE—Node is passing traffic and working as expected.

- DEGRADED—Node transitions to DEGRADED state when a soft fault occurs. DEGRADED state allows Layer 7 continuity for sessions that the DEGRADED state device owns. Traffic links are down in DEGRADED state. The node can transition from DEGRADED to INIT state if all the faults are resolved.

- FAILED—Node transitions to FAILED state when a hard fault occurs. FAILED state has traffic ports down and does not allow Layer 7 continuity. The node can transition from FAILED to INIT state if all the faults are resolved.

- SUSPENDED—Triggered by administrator. Another cause of SUSPENDED state is if a node state flaps to DEGRADED or FAILED state repeatedly; the node is SUSPENDED after six flaps. An administrator can unsuspend the node. SUSPENDED state has traffic ports down and does not allow Layer 7 continuity.

Because the collective states of the nodes in an NGFW cluster determine the cluster state, the cluster state will be:

- OK—If all nodes are in ONLINE state.

- IMPACTED—If at least one node is in ONLINE state and another node isn't in ONLINE state.

- ERROR—If there isn't a single node in ONLINE state.
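
The three rules above translate directly into a small function. This is a sketch for illustration; the `cluster_state` function and the use of plain strings for states are choices made here, not a PAN-OS interface.

```python
from typing import Iterable


def cluster_state(node_states: Iterable[str]) -> str:
    """Derive the cluster state from the states of its nodes."""
    states = list(node_states)
    if not states:
        raise ValueError("a cluster needs at least one node")
    online = sum(1 for state in states if state == "ONLINE")
    if online == len(states):
        return "OK"        # all nodes are ONLINE
    if online > 0:
        return "IMPACTED"  # at least one ONLINE, at least one not
    return "ERROR"         # no node is ONLINE


print(cluster_state(["ONLINE", "DEGRADED"]))
```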

The System Monitoring portion of the NGFW cluster configuration allows you to specify the minimum number of network cards and data processing cards that must be functional. If the node drops below that minimum, the node state transitions to DEGRADED or FAILED (whichever you configured).
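
The minimum-capacity rule can be modeled as below. This is a hedged sketch: the parameter names are hypothetical, and it assumes that falling below either minimum (network cards or data processing cards) triggers the transition, which the text implies but does not spell out.

```python
from typing import Optional


def capacity_state(functional_network_cards: int,
                   functional_dpcs: int,
                   min_network_cards: int,
                   min_dpcs: int,
                   state_on_shortfall: str) -> Optional[str]:
    """Return the configured state if the node is below minimum capacity.

    Returns None when capacity is sufficient. state_on_shortfall is the
    administrator's choice and must be 'DEGRADED' or 'FAILED'.
    """
    if state_on_shortfall not in ("DEGRADED", "FAILED"):
        raise ValueError("state_on_shortfall must be DEGRADED or FAILED")
    # Assumption: a shortfall in either card type counts.
    below_minimum = (functional_network_cards < min_network_cards
                     or functional_dpcs < min_dpcs)
    return state_on_shortfall if below_minimum else None


# Two of seven DPCs are functional, but the configured minimum is three.
print(capacity_state(2, 2, min_network_cards=1, min_dpcs=3,
                     state_on_shortfall="DEGRADED"))
```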

System Faults

Soft faults and hard faults affect whether the node state is DEGRADED or FAILED. Soft faults result in a node state of DEGRADED; hard faults result in a node state of FAILED. Causes of a soft fault are:

- ID Map synchronization has failed.

- IPSec VPN security association (SA) synchronization has failed.

- The system reported an out-of-memory (OOM) fault.

- Chassis does not have minimum capacity and degraded state is configured.

- The cluster node is in degraded state waiting to be suspended.

Causes of a hard fault are:

- Cluster infrastructure service has failed.

- The system reported a disk fault.

- The chassis has no DPC slots up.

- FIB synchronization has failed.

- The chassis does not have minimum capacity and failed state is configured.

- Cluster node configuration is incompatible with other nodes.

- Cluster messaging service has failed.

- Cluster node is avoiding a split brain.

- Cluster node is recovering from a split brain.
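
The two fault lists can be captured as a lookup that maps active faults to a node state. The fault identifiers below are hypothetical short names invented for this sketch (PAN-OS does not necessarily use them), and the rule that a hard fault outranks a soft fault when both are present is an assumption.

```python
from typing import Iterable, Optional

# Hypothetical identifiers for the soft faults listed above.
SOFT_FAULTS = {
    "id_map_sync_failed",
    "ipsec_sa_sync_failed",
    "out_of_memory",
    "below_min_capacity_degraded",
    "waiting_to_be_suspended",
}

# Hypothetical identifiers for the hard faults listed above.
HARD_FAULTS = {
    "cluster_infrastructure_failed",
    "disk_fault",
    "no_dpc_slots_up",
    "fib_sync_failed",
    "below_min_capacity_failed",
    "config_incompatible",
    "cluster_messaging_failed",
    "avoiding_split_brain",
    "recovering_from_split_brain",
}


def node_state_for(faults: Iterable[str]) -> Optional[str]:
    """Map active faults to DEGRADED, FAILED, or None (no fault)."""
    active = set(faults)
    unknown = active - SOFT_FAULTS - HARD_FAULTS
    if unknown:
        raise ValueError(f"unknown faults: {sorted(unknown)}")
    if active & HARD_FAULTS:
        return "FAILED"    # assumption: a hard fault takes precedence
    if active & SOFT_FAULTS:
        return "DEGRADED"
    return None


print(node_state_for(["out_of_memory"]))
```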

Role of the Leader Node

As mentioned, one node of the NGFW cluster is elected the leader node, and the other node is a nonleader node. The leader and nonleader nodes synchronize the following system runtime information:

- Management Plane:
  - User to Group Mappings
  - User to IP Address Mappings
  - DHCP Lease (as server)
  - Forwarding Information Base (FIB)
  - PAN-DB URL Cache
  - Content (manual sync)
  - PPPoE and PPPoE Lease
  - DHCP Client Settings and Lease
  - SSL VPN Logged in User List
- Dataplane:
  - ARP Table
  - Neighbor Discovery (ND) Table
  - MAC Table
  - IPSec SAs [security associations] (phase 2)
  - IPSec Sequence Number (anti-replay)
  - Virtual MAC

Upon a leader node failover, the following protocols and functions are renegotiated:

- BGP

- OSPF

- OSPFv3

- RIP

- PIM

- BFD

- DHCP client

- PPPoE client

- Static route path monitor

Multiple Virtual Systems in an NGFW Cluster

To use the firewalls in a cluster efficiently, customers need the cluster to support multiple virtual systems, which are an important capability of an NGFW. Beginning with PAN-OS 11.1.7, an NGFW cluster supports multiple virtual systems (multi-VSYS). An NGFW node (and therefore the cluster) supports a maximum of 25 virtual systems. Configure the PA-7500 Series firewalls in the cluster with the same multi-VSYS support (either enabled or disabled). The cluster does not support inter-VSYS traffic or shared gateways.
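
The multi-VSYS constraints lend themselves to a quick planning check. The sketch below is illustrative: each node is described by a hypothetical `(multi_vsys_enabled, vsys_count)` tuple, and `validate_multi_vsys` is not a Panorama function.

```python
from typing import List, Tuple

MAX_VSYS = 25  # from the text: a node (and the cluster) supports at most 25

NodeVsys = Tuple[bool, int]  # (multi-VSYS enabled, number of virtual systems)


def validate_multi_vsys(nodes: List[NodeVsys]) -> List[str]:
    """Return a list of problems with the planned multi-VSYS settings."""
    problems = []
    # All nodes must have multi-VSYS either enabled or disabled.
    if len({enabled for enabled, _ in nodes}) > 1:
        problems.append("multi-VSYS setting differs between nodes")
    for index, (_, count) in enumerate(nodes, start=1):
        if count > MAX_VSYS:
            problems.append(f"node {index} exceeds {MAX_VSYS} virtual systems")
    return problems


print(validate_multi_vsys([(True, 10), (False, 1)]))
```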

Layer 7 Support and Graceful Failover

In general, NGFW clustering aims to provide parity with HA regarding Layer 7 support. The following table lists Layer 7 features and whether a standalone PA-7500 Series firewall supports them, whether NGFW cluster nodes support them, and whether they support graceful failover.

| Layer 7 Feature | Standalone PA-7500 Series Firewall | NGFW Cluster Node | Graceful Failover |
|---|---|---|---|
| Proxy | | | |
| Decryption (Forward Proxy/Inbound Inspection) | Yes | Yes | No |
| Hardware Security Module (HSM) | Yes | Yes | N/A (HSM must be configured per node) |
| Certificate Revocation (CRL/OCSP) | Yes | Yes | Service Route |
| Decryption Port Mirror | Yes | Yes | N/A |
| Network Packet Broker | Yes | Yes, but no redundancy support | No |
| SSH Decryption | Yes | Yes | No |
| GlobalProtect™—IPSec Tunnel | Yes | Yes | No |
| GlobalProtect—SSLVPN Tunnel | Yes | Yes | No |
| Clientless SSLVPN | Yes | No | No |
| LSVPN (Satellite) | Yes | Yes | Yes |
| IDmgr | Yes | Yes | Yes |
| Content Threat Detection (CTD) | | | |
| Application-Level Gateway (ALG) | Yes | Yes | No |
| dnsproxy | Yes | Yes | No |
| varrcvr | Yes | Yes | No |
| threat detection | Yes | Yes | No |
| Advance Data Loss Prevention (DLP) | Yes | Yes | No |
| URL Filtering | Yes | Yes | No |
| WIF features | Yes | Yes | No |
| App-ID Cloud Engine (ACE) | Yes | Yes | No |
| User-ID and Configuration | | | |
| User Identification | Yes | Yes | Yes |
| Device Identification | Yes | Yes | Yes |
| CUID | Yes | Yes | Yes |
| Device Quarantine | Yes | Yes | Yes |
| Dynamic Address Group (DAG) | Yes | Yes | Yes |
| DUG | Yes | Yes | Yes |

ID Map Sync—Management planes of NGFW cluster nodes individually generate IDs for various types of objects (such as IP address objects and security profile objects) in a firewall configuration. These are known as Management Global IDs. Each node maps its own set of IDs with the set of IDs of the peer cluster node. Some IDs are used in session flow data. Upon a chassis failover, Layer 4 sessions are marked as orphaned and sent to the peer chassis for continued processing. During this process, the IDs in the session flow data are updated to match the set of IDs that the peer's management plane provided. NGFW cluster nodes must complete their ID map synchronization before leaving INIT state.
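
The ID mapping idea can be shown with a toy model. This is a conceptual sketch only: it assumes that both nodes hold the same configuration objects and that objects can be matched by name, and the `build_id_map` and `remap_session_ids` functions are hypothetical. The actual PAN-OS synchronization mechanism is not described in the text.

```python
from typing import Dict, List


def build_id_map(local_ids: Dict[str, int],
                 peer_ids: Dict[str, int]) -> Dict[int, int]:
    """Map each locally generated ID to the peer's ID for the same object.

    Both arguments map an object name to the ID that node generated.
    Assumption: objects are matched by name.
    """
    missing = sorted(set(local_ids) - set(peer_ids))
    if missing:
        # In this model, an incomplete map means the sync has not finished.
        raise ValueError(f"peer has no ID for: {missing}")
    return {local_id: peer_ids[name] for name, local_id in local_ids.items()}


def remap_session_ids(session_ids: List[int], id_map: Dict[int, int]) -> List[int]:
    """Rewrite the IDs in orphaned session flow data for the peer chassis."""
    return [id_map[object_id] for object_id in session_ids]


node1_ids = {"addr-web": 7, "profile-av": 12}
node2_ids = {"addr-web": 3, "profile-av": 40}
id_map = build_id_map(node1_ids, node2_ids)
print(remap_session_ids([12, 7], id_map))
```

In this model, a session that referenced objects 12 and 7 on the failed chassis continues on the peer with that node's IDs for the same objects.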

Additionally, Panorama generates a subset of IDs (zone, logical interface, and virtual router IDs) and pushes them to each cluster node. These are Cluster Global IDs.

If you need to migrate from a non-PA-7500 Series firewall, proceed to Migrate to NGFW Clustering.

If you're using a PA-7500 Series firewall, proceed to Configure an NGFW Cluster and then view the NGFW Cluster Summary and Monitoring information.